But at the cost of ethics, theft, racist algorithms, invasion of privacy, and violation of rights?

by Mary Louise Malig

Read the publication in full here

Contents:

Introduction

I. What is Artificial Intelligence?

II. The controversy of AI art theft

III. The AI “arms race”

IV. The urgency for policies to protect people

V. Afterthoughts

Introduction

Science fiction books, movies and pop culture have made the term “artificial intelligence”, or AI, part of the everyday vernacular. Movies have featured the vast possibilities of AI, from super machines planning the demise of humanity to AIs that have become sentient, conscious and able to feel joy, sadness and pain. The popular Marvel Avengers movies featured, as one of the supervillains the heroes had to fight, a sentient AI bent on world destruction. Interestingly, since the AI villain had access to and control over the entire internet, the heroes had to work offline and do things the “traditional” analogue way. We also have movies of human beings falling in love with AI “beings”, or of AIs with a superintelligence that could surpass humans. Then there is “artificial general intelligence”, which is not exactly the same as AI but rather a step beyond it, because it is supposedly just as intelligent and capable as a human, well beyond something that can be programmed. The point is that the list could go on: the world of fiction has created a mythology, a blending of fact and fiction, surrounding not only artificial intelligence but also computers, robotics, automation and a whole host of other technologies. This has also created a fascination with both the potential and the dangers of the future of AI.

However, out here in the real world, AI is neither fiction nor an idealized figment of someone’s imagination. Artificial Intelligence, or AI for short, is generally defined as the ability of computers to emulate human thought and perform tasks in real-world environments, such as perceiving, analyzing, understanding, collating and synthesizing. This may include language models, faster execution of computer tasks, computation of complex mathematical problems, machine learning, the analysis of big data and, more importantly, supposedly using these “learnings” and data to then develop its own “intelligence”. Some advocates of AI say their goal is to create “artificial general intelligence”, which refers to the ability of an AI to be just as intelligent as a human. Whether that will be for the good of humanity or the end of it is a question posed by some AI engineers themselves and by many others who are grappling with it. Whether the AI tools coming out now are actually doing good or potentially causing harm will be discussed later in the publication, as it delves deeper into the current and fast-developing new systems of AI. As will also be explained further in the publication, several prophesied future abilities of AI are clearly not here yet, and the media frenzy over the recent developments in language programs is greatly hyped; according to some experts who have reviewed these programs, they are not as “intelligent” as proponents claim them to be. This publication first sets out to separate fact from fiction around AI and the technologies around it. This first main goal is to break things down, from technical terms to technological advancements, and present them in easy-to-understand ways; one may be surprised that many of these supposedly difficult or overly technical things are already part of what one uses in daily life, such as smartphones, digital platforms, banking and many others.
And although this first chapter may contain technical terms that seem daunting, this should not intimidate. On the contrary, it should be seen as an entryway to a better grasp of the new technologies, especially since many of them are actively used in the digital economy, in which almost everyone is a participant. Knowledge and understanding of artificial intelligence, of the capabilities already being implemented and of its potential capacities, is crucial to understanding that some of these capabilities can be of great service. Examples already exist of AI helping humans in information sharing, healthcare and the banking sector, among other fields. Equally important is understanding that some existing AI technologies can also cause harm. Examples already exist of algorithms tested for use in credit checks or for “scoring people on benefits and calculating the fraud risk of benefits recipients”[1] that ended up producing biased results. These inequities, harmful biases and discrimination have raised the question, and prompted a deep re-examination, of whether such biases are embedded in the algorithms from the start because of biases that already exist among programmers, engineers and others. It is crucial to know which systems are helping and which are harming, to act to stop this bias, and to identify actions that can be taken to call for policies that protect people’s rights.

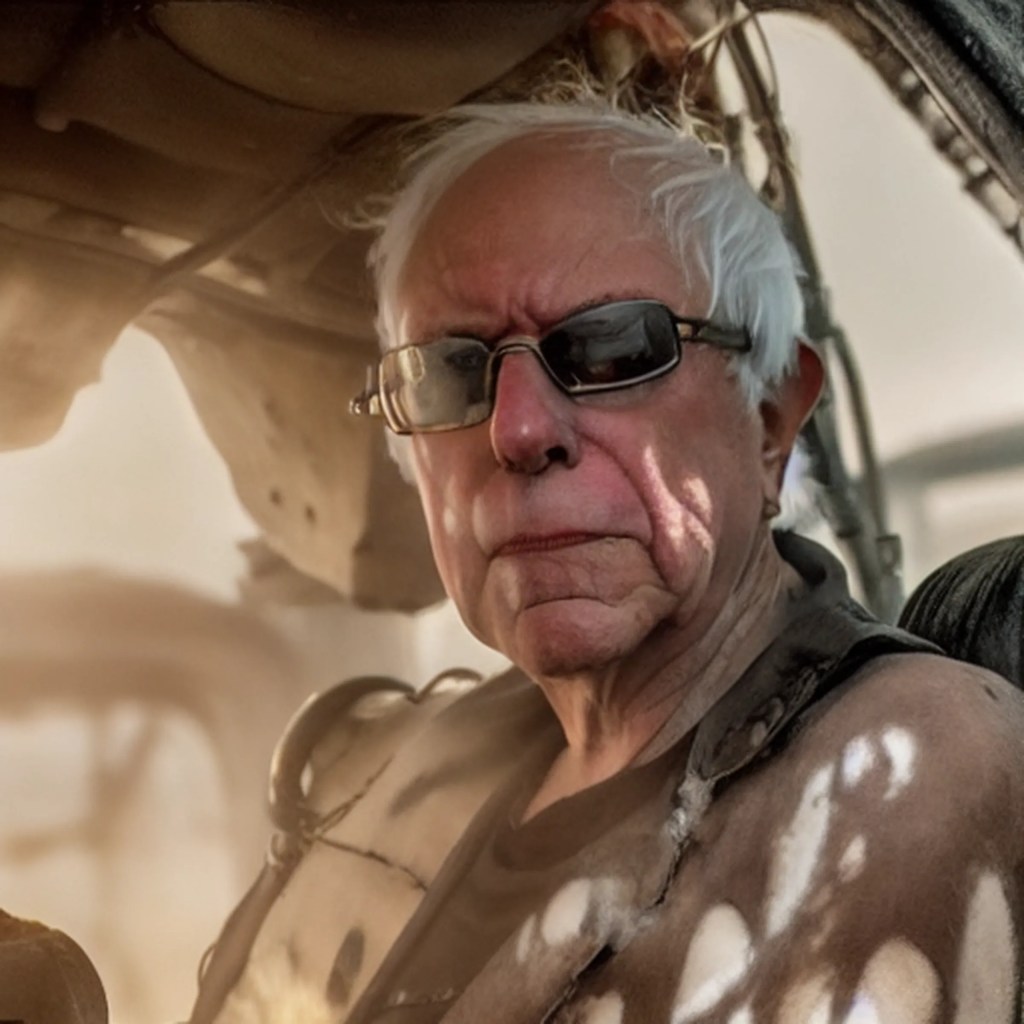

Also part of the first chapter is the relationship between AI and the digital economy. The digital economy and the potential of AI within it are examined together to remind readers that whatever developments this technology reaches, they have a concrete impact on the digital economy. A more powerful, more intelligent and more capable AI also increases the potential for faster and more effective ways of extracting data from its source, which in turn increases the capacities and capabilities of the platforms that use them. This opens a whole new world of potential for expanding the ways and means of extractivism beyond just data.

The second chapter delves into the ongoing controversy over an AI product used by the internet-using public to “generate art”, which produces output using original, copyrighted artwork by artists from the indie to the very well known, most if not all of it without consent or recognition. Worse, the algorithms are trained on a dataset of roughly 6 billion images scraped from the internet, complete with artists’ signatures and copyright watermarks, by a supposed non-profit called LAION. As it states in its self-description: “The Large-scale Artificial Intelligence Open Network (LAION), (a German) non-profit organization, provides datasets, tools and models to liberate machine learning research. By doing so, we encourage open public education and a more environment-friendly use of resources by reusing existing datasets and models.”[2] Through its non-profit status, LAION was able to generate LAION-5B: “a dataset of 5.85 billion CLIP-filtered image-text pairs, 14x bigger than LAION-400M, previously the biggest openly accessible image-text dataset in the world.”[3] The AI “art generator” called Stable Diffusion has trained on and accesses this massive dataset to generate images from text.

How it works: one goes to Stable Diffusion and types in keywords describing the image or “artwork” one would like to generate, such as “Bernie Sanders in Mad Max Fury Road” (see the result below).

An image created by Stable Diffusion from the software’s subreddit. The exact text description used to create the image was “Photo of Bernie Sanders in Mad Max Fury Road (2015), explosions, white hair, goggles, ragged clothes, detailed symmetrical facial features, dramatic lighting.” Image: Reddit / Licovoda

https://www.theverge.com/2022/9/15/23340673/ai-image-generation-stable-diffusion-explained-ethics-copyright-data

Notice how one can immediately recognize United States Senator Bernie Sanders, and how easily recognizable the elements of the 2015 movie Mad Max Fury Road are. It does not stop there. The public have been using Stable Diffusion, the text-to-image AI tool, with the names of artists as keywords in their text-to-image instructions: from unknown, independent, struggling artists, to artists who are doing just well enough for art to be their livelihood, to the extremely well known. They can do this because of the nearly 6 billion images that Stable Diffusion has trained on, and because the algorithms are specifically programmed not only to recognize images but also to study the styles and techniques of the artists, in order to replicate not just the images but the artist. It is crucial to note that this process only worked because there was a massive dataset to train on. The algorithms and data processing only work if they have the data. And as if things could not become more controversial: that German non-profit is funded by the tech company Stability AI, which owns Stable Diffusion, which trains on the LAION-5B dataset. Chapter two will delve deeper into this, including the class action lawsuit; the other tech companies involved, such as Midjourney, DeviantArt and others; the question of whether algorithms are “generating” art or stealing artists’ techniques; and all the other issues and problems that have arisen from what one can see as the recklessness of releasing an AI tool such as this.

Chapter three delves into the present and ever-changing status of the AI language bots as Big Tech companies race each other to the proverbial gold mine. This chapter looks into the current cutthroat competition among the language model chatbots. In the running: OpenAI’s ChatGPT; Microsoft’s Bing (or Sydney); and Google’s Bard (built on the LaMDA language model). There may be other up-and-coming players around, but for this publication, this is plenty to discuss.

These chatbots have been the talk of the town and have drawn reviews from the scientific community, schools, ethicists and linguistics experts all the way to the media and the general public. (To be clear, some of these programs are only available to, and being reviewed by, the media and other invited experts, not the public at large.) They have seemingly captured the imagination of people who have seen the movies with sentient AI, the kind that can hold “intelligent” and “sentient” conversations with humans. The reviews have ranged from amazement to fear, with some saying that these bots are downright unworthy of even being referred to as “intelligent”, as they simply plagiarize amorally. Some reviewers have also concluded that one of the chatbots appears to be simply unhinged.

Chapter three will discuss these technological developments and present the reviews. But before one gets too excited: the sentient and intelligent AIs of the movies are not these chatbots, and although some may claim that they are, those claims are just that, claims. As in any good scientific process, claims have to be tested and proven, but many examples from reviewers have already shown that these language models, while an impressive display of machine learning and of the potential of deep learning algorithms in large language models, still have, as many experts state, a long way to go even to be deemed safe for wide release. In fact, according to ethicists and many others in the scientific community, engineers are not supposed to be aiming at or working towards making a machine sentient or human. And even if they are focusing only on building high-functioning language models, they should not be reckless in their arms race to this imaginary finish line, as many unintended harmful consequences can occur along the way. The hubris of these developers must be held in check, as history has shown very well that too much hubris never ends well.

Furthermore, despite the advancements in image generation, language models and other AI tools, the mythology that superintelligence is coming is just that, a myth. Many experts say that the technology seen so far is nowhere near the true level of Artificial Intelligence that the field has long defined and agreed on. The message is clear: Do not buy the hype of AI!

Also, although the publication only delves into these two examples of AI, text-to-image generation and large language models, this is not to say that the various other fields developing AI and automated systems are not important to discuss. These two examples were chosen because of their furious, one could say reckless, pace of development; the global attention they are receiving, from the watchful and worried to the wildly enthusiastic and unrealistic to the curious; and the very real consequences and harms they have caused and are causing and, most worrisome, the potential for even more harm to come. And although these harms arise from these two examples, the bottom line is that if precautions are not taken and lessons are not learned, the same harmful consequences, and even more dangerous ones, can occur, even if unintentionally, in any of the other fields of AI and automated systems.

The fourth and final chapter of this publication looks forward. It presents the various proposals on the table from civil society, the United Nations Educational, Scientific and Cultural Organization (UNESCO) and the governments of the United States and the European Union on how to address head-on the consequences and harms that automated systems and Artificial Intelligence may bring with their advancements. The proposed UNESCO recommendations on a global agreement on the Ethics of AI, the US AI Bill of Rights, the proposed EU AI Liability Directive and the various proposals from civil society around the globe on principles to protect human rights will be presented and discussed, in the hope of ensuring that the proposed policies, regulations and protections of human rights keep pace with, or even move faster than, the technological advancements of Artificial Intelligence and its systems. A demand from various organizations and personalities for a temporary moratorium on the training of powerful AI systems is also gaining momentum. Part of the letter states: “AI labs and independent experts should use this pause to jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts.”[4]

One thing is for sure: the race to develop more and more advanced AI is here, in various forms, and as a consequence it is powerfully amplifying the capabilities of the digital economy. What these AI systems are doing at present, or are being designed to do in the near future, is at the center of this publication. Are these benign tools simply using algorithms to advance technology, the digital economy and beyond, or are some of them being implemented at the cost of harm, ethics, bias, theft, racism, privacy and the violation of human rights? Is recklessness being ignored in the name of speed, or of being the first to develop, in this burgeoning “arms race” or “gold rush” in the highly competitive world of AI developers?

It is crucial to understand these developments in automated systems and AI, because it is in knowing what they are capable of doing, both for good and for harm, that one can act upon that knowledge and take appropriate action to protect oneself or join others in clamoring for policies and protections. It is absolutely critical that civil society and the concerned public ramp up the pressure on their governments for protections and policies that uphold and defend human rights. The UNESCO recommendations on the Ethics of AI, the US AI Bill of Rights, the EU AI Liability Directive and the many other proposals from civil society, along with existing or proposed bills, should take in input not only from those directly impacted but also from all those who want to contribute, as these measures will affect many if not all. These bills and other policies should move with great urgency and should go hand in hand with a framework for legally implementing and enforcing them, to ensure that these protections and guarantees do not remain on paper but rather become enforceable actions.

[1] Geiger, Gabriel, Schot, Evaline, Tromp, Reinier, Hijink, Marc, Davidson, David, Hekman, Ludo, Howden, Daniel, Aung, Htet, Constantaras, Eva. “Junk Science Underpins Fraud Scores: Crude software in the Netherlands scored 100,000s of people on benefits, in first reconstruction of this kind, we reveal prejudice and randomness.” Lighthouse Reports, June 25, 2022

https://www.lighthousereports.nl/investigation/junk-science-underpins-fraud-scores/

[4] Future of Life Institute “Pause Giant AI Experiments: An Open Letter” March 22, 2023

https://futureoflife.org/open-letter/pause-giant-ai-experiments/
