Chapter Two of Amazing Artificial Intelligence*

Read the publication in full here

This chapter examines the controversy around AI tools that generate images from text: where the training images came from, the practice of data laundering, and the complete lack of consent from the artists behind billions of scraped images.

Before delving into this second chapter, it is important to note that this publication looks at only two examples of what Artificial Intelligence can do and is already doing. These two examples, AI tools that generate images from text and AI tools called Large Language Models, were chosen because they are at the forefront of the field and are developing rapidly and, one might even say, recklessly.

Another crucial reason for choosing these two examples is to flag and illustrate that the harms occurring in these two fields may very well occur in the many other fields of AI that are already in use or in various stages of development. These two examples are cautionary tales, but not theoretical ones: the consequences are very real and are happening to real people in the real world.

This second chapter covers not one controversial issue but several, with interconnected but distinct questions that in turn raise more questions. They range from accusations of data laundering and copyright violation to threats to the livelihoods of artists, designers, and others who work in these spheres. There is also a legal question: if a company uses nonprofit academic researchers to circumvent copyright law, should that not be considered data laundering? Further issues are discussed below, including the recklessness with which these tools were released and the unintended consequences that followed, such as making it easier to generate deepfakes and to place the faces of real people on fake, defamatory and pornographic images simply by typing a prompt.

For this story to be appreciated fully, it needs to start at the very beginning. Before 2015, nearly everyone, from academic research centers to technology laboratories, was participating in a decentralized effort to label images with captions so that those images could be identified more easily. To clarify, images were tagged with captions or keywords in their metadata so that they could be found. For example, a photo of a pedestrian lane would be tagged “pedestrian lane”, so that a computer looking for an image of a pedestrian lane could find the tag and hence the photo. In 2015, researchers at a university in Toronto had an idea: what if the process could be reversed? Could a program be designed in which one types text and the computer generates the image? They tested the idea with a small dataset and it worked. The image was blurry, but the concept was proven. The paper was published and shared with the rest of the scientific, academic and technology world.
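The caption-based lookup described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the filenames, the tags, and the helper names `build_tag_index` and `find_images` are hypothetical and do not come from any of the systems discussed in this chapter.

```python
from collections import defaultdict


def build_tag_index(images):
    """Map each lowercase tag to the set of image filenames carrying it."""
    index = defaultdict(set)
    for filename, tags in images.items():
        for tag in tags:
            index[tag.lower()].add(filename)
    return index


def find_images(index, query):
    """Return the filenames tagged with the query, in alphabetical order."""
    return sorted(index.get(query.lower(), set()))


# Hypothetical photo collection: filename -> human-written tags.
images = {
    "photo_001.jpg": ["pedestrian lane", "street"],
    "photo_002.jpg": ["cat"],
    "photo_003.jpg": ["Pedestrian Lane"],
}

index = build_tag_index(images)
matches = find_images(index, "pedestrian lane")
print(matches)  # ['photo_001.jpg', 'photo_003.jpg']
```

Here the text only helps to find an image that already exists. The idea that came out of Toronto in 2015 runs in the opposite direction: the text is used to produce a new image.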

This generated a lot of excitement, and in January 2021 OpenAI announced DALL-E, which could generate images from text prompts. It later demonstrated even better images with DALL-E 2. Google then built Imagen and likewise announced that it could create images from text input. However, OpenAI and Google were hesitant and, erring on the side of caution, did not release the tools to the general public. There were concerns about possible unintended consequences, and some felt more time was needed for testing, so the companies gave only limited access with safeguards. Other tech companies, such as Midjourney and Stability AI (which, incidentally, was founded by a former hedge fund manager), were impatient. They worked on the technology, and Stability AI created Stable Diffusion, its own filter-free version, open to the general public. “Stable Diffusion is a deep learning, text-to-image model released in 2022. It is primarily used to generate detailed images conditioned on text descriptions, though it can also be applied to other tasks such as inpainting, outpainting, and generating image-to-image translations guided by a text prompt.”[8]

But as mentioned earlier, these AI tools are only as good as the size of the dataset they train on. And massive it is: almost 6 billion images. As explained in the introduction, and to reiterate: “The Large-scale Artificial Intelligence Open Network (LAION), (a German) non-profit organization, provides datasets, tools and models to liberate machine learning research. By doing so, we encourage open public education and a more environment-friendly use of resources by reusing existing datasets and models.”[9] Through its non-profit status, LAION was able to create LAION-5B: “a dataset of 5.85 billion CLIP-filtered image-text pairs, 14x bigger than LAION-400M, previously the biggest openly accessible image-text dataset in the world.”[10] In other words, in the name of academic research, LAION scraped almost 6 billion images from the internet with no consent and no option to opt in or opt out, because the scraping was ostensibly for nonprofit research. The signatures, watermarks and labels that appear on images generated by Stable Diffusion show that LAION scraped those images complete with copyright label, signature and watermark.

The AI “art generator” Stable Diffusion was trained on this massive dataset in order to generate images from text. How was it able to access LAION-5B? Because Stability AI, which owns Stable Diffusion, paid for LAION-5B and funds LAION. Now Stability AI, Midjourney and DeviantArt (which has its own generator, DreamUp) all use Stable Diffusion, which can produce high-quality output because of the nearly 6 billion images from LAION-5B that it was trained on. Furthermore, the algorithms are designed not only to recognize images but also to learn the styles and techniques of artists, so that they replicate not just an image but the artist. One just has to go to Stable Diffusion and key in text prompts describing the desired “artwork” or image; users are advised to be as specific as possible with keywords and to list the actual artists whose style they want used. Usage has exploded: at last count, Stable Diffusion alone had 10 million daily users.

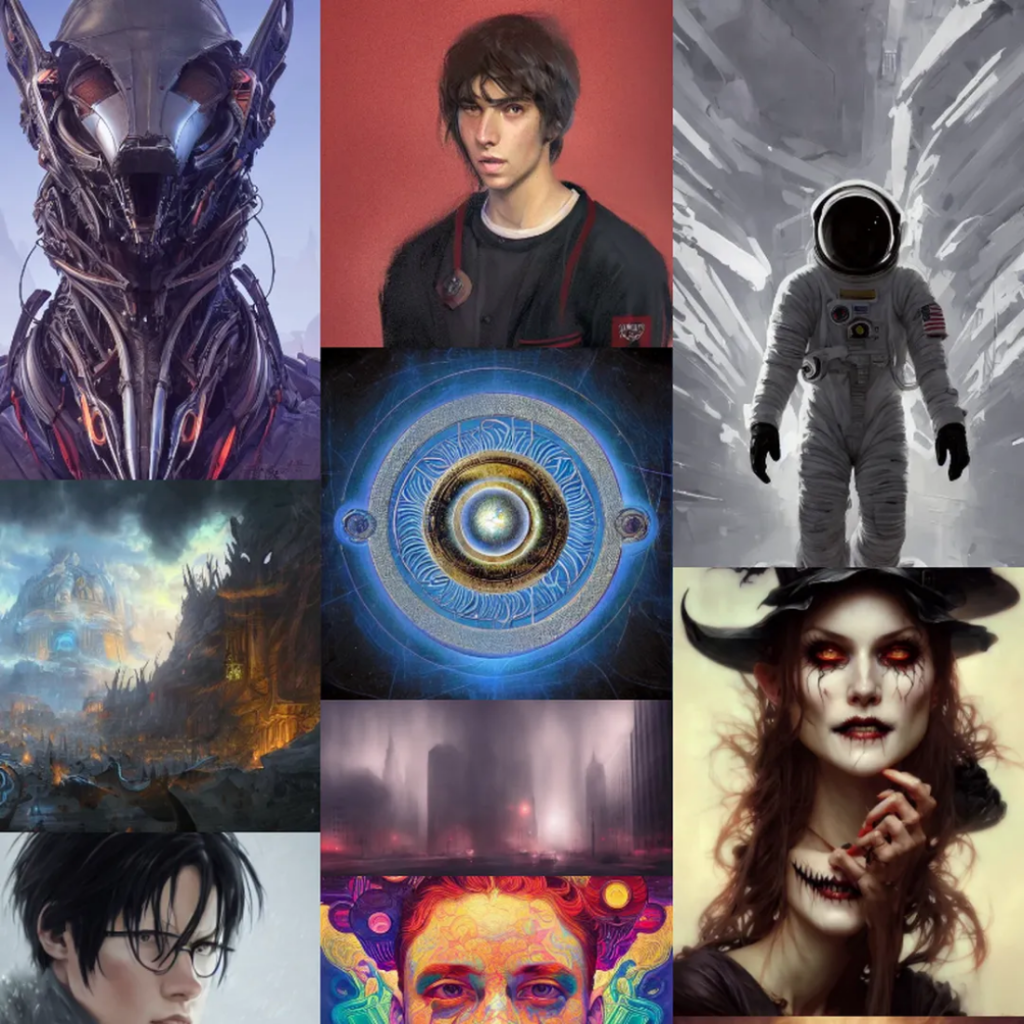

Here are some examples of what an AI text to image generator can produce:

Stealing fire from the gods, illustrated by Stable Diffusion. Exact prompt: “fantasy portrait of a hero stealing fire from the gods, digital painting, illustration, high quality, fantasy, style by jordan grimmer and greg rutkowski”. Image: James Vincent https://www.theverge.com/2022/9/15/23340673/ai-image-generation-stable-diffusion-explained-ethics-copyright-data

Stable Diffusion is notable for the quality of its output and its ability to reproduce and combine a range of styles, copyrighted imagery, and public figures. Top-left is “Mickey Mouse WW2 Propaganda poster,” and top-right is “Boris Johnson as 12th century peasant, oil painting.” Images via Lexica https://www.theverge.com/2022/9/15/23340673/ai-image-generation-stable-diffusion-explained-ethics-copyright-data

A random selection of images created using the AI text-to-image generator Stable Diffusion. Image: The Verge via Lexica https://www.theverge.com/2022/9/15/23340673/ai-image-generation-stable-diffusion-explained-ethics-copyright-data

For some artists, though, it has felt like an absolute violation to have their artwork and name used so extensively and entirely without their consent. A case in point is Hollie Mengert, an artist who woke up to the nightmare of a user on the internet forum Reddit called “MysteryInc152” proudly posting about work he had done using DreamBooth, “a technique for introducing new subjects to a pretrained text-to-image diffusion model, training it with as little 3 to 5 images of a person, object, or style.”[11] MysteryInc152 was very proud of his work. As he stated, “2D illustration Styles are scarce on Stable Diffusion, so I created a DreamBooth model inspired by Hollie Mengert’s work.”

“Using 32 of her illustrations,[12] MysteryInc152 fine-tuned Stable Diffusion to recreate Hollie Mengert’s style. He then released the checkpoint under an open license for anyone to use. The model uses her name as the identifier for prompts: ‘illustration of a princess in the forest, holliemengert artstyle,’ for example.”[13]

See for yourself: the original artwork by Hollie Mengert is at the top, and the images generated with Stable Diffusion and DreamBooth (in Hollie’s style) are below.

Hollie Mengert spoke to the writer Andy Baio, and she was clear: “My initial reaction was that it felt invasive that my name was on this tool, I didn’t know anything about it and wasn’t asked about it,” she said. “If I had been asked if they could do this, I wouldn’t have said yes.”[14] She was also concerned that, with no control over these images but with her name still in the prompt that generates them, she has no way of telling current or potential clients that those images have nothing to do with her. Her art is clearly both her passion and her livelihood, and seeing it “fine-tuned and generated” by a stranger will obviously affect that livelihood at some point in the future.

Baio, who interviewed Hollie Mengert, also tracked down “MysteryInc152” and interviewed him. MysteryInc152 is Ogbogu Kalu, a mechanical engineering student who hopes to make a series of comic books in a 2D comic book style.[15] Kalu explained that he had been looking for an AI generator that could produce a consistent 2D comic book style, realizing that this would be faster than spending years drawing it himself. He told Baio that DreamBooth finally gave him the results he was looking for, and that he came across Mengert’s artwork while helping someone with a project based on her work. “Before publishing his model, Ogbogu wasn’t familiar with Hollie Mengert’s work at all. He was helping another Stable Diffusion user on Reddit who was struggling to fine-tune a model on Hollie’s work and getting lackluster results. He refined the image training set, got to work, and published the results the following day. He told (Andy Baio) the training process took about 2.5 hours on a GPU at Vast.ai, and cost less than $2.”[16]

Baio told him that Hollie Mengert was unhappy, that she felt the model was invasive, and that she would not have given her consent. Did he take it down or apologize? No. He felt that the tools were there and so were the images, so it was inevitable that people would use them. He did add a disclaimer saying that Hollie Mengert had not been involved in his generated works.[17]

This is very little comfort to the artist, whose years of hard work, skill and talent are now simply available to others without her consent.

A group of artists have set up a website for artists to find out if their artwork has been trained on by these AI text to image generators: http://haveibeentrained.com

This issue brings us to a number of interconnected cases:

1) The class action lawsuit brought by three artists, Sarah Andersen, Kelly McKernan and Karla Ortiz, represented by the Joseph Saveri Law Firm LLP, against Stability AI, Midjourney, and DeviantArt

2) The lawsuit filed by Getty Images represented by Weil, Gotshal & Manges LLP and Young Conaway Stargatt & Taylor LLP against Stability AI over Stable Diffusion

3) The data laundering that Stability AI carried out by paying LAION, a non-profit research organization, to scrape the internet for images and build the dataset LAION-5B, knowing full well that LAION could do so because of its non-profit research status. Furthermore, “The LAION-5B database is maintained by a charity in Germany, LAION, while the Stable Diffusion model — though funded and developed with input from Stability AI — is released under a license from the Computer Vision and Learning lab at Germany’s Ludwig Maximilian University (LMU) Munich university.”[18] Does this mean that to sue over Stable Diffusion, one has to sue the lab at the university in Munich?

The class action lawsuit has been brought against three tech companies: Stability AI, Midjourney and DeviantArt (which has its own AI art generator, DreamUp). The claim is that all three have been using the AI product Stable Diffusion, which generates images from text after being trained on a dataset of more than 5 billion images scraped from the internet without the consent of the original artists. The lawsuit alleges that in this process the three companies have infringed the rights of “millions of artists”. The three artists who filed the lawsuit have called upon fellow artists to join them in the class action.

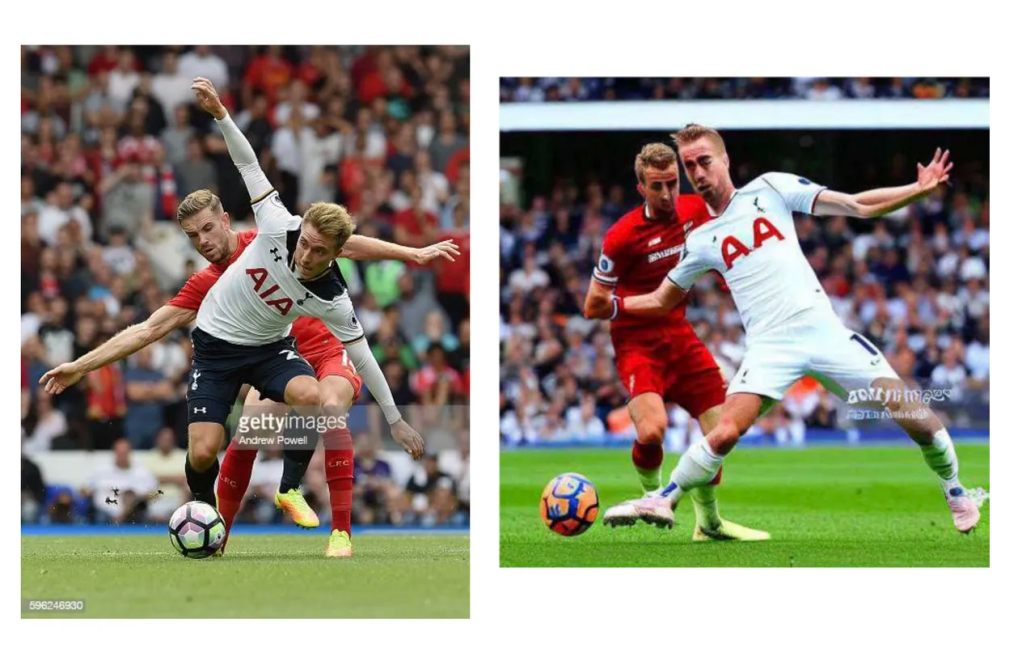

Soon after that lawsuit was filed, Getty Images filed its own lawsuit against Stability AI over its AI art generator Stable Diffusion. “The stock photography company is accusing Stability AI of ‘brazen infringement of Getty Images’ intellectual property on a staggering scale.’ It claims that Stability AI copied more than 12 million images from its database ‘without permission … or compensation … as part of its efforts to build a competing business,’ and that the startup has infringed on both the company’s copyright and trademark protections.”[19] When the output generated by Stable Diffusion draws on an image from the Getty Images library, it is hard to hide, because the image was scraped from the internet complete with the Getty Images watermark. The output is then not only sometimes a distortion of the original material, showing no respect for it; the faded watermark is also a brazen reminder that the image was taken without consent and that this is an instance of copyright infringement.

An illustration from Getty Images’ lawsuit, showing an original photograph and a similar image (complete with Getty Images watermark) generated by Stable Diffusion. Image: Getty Images

https://www.theverge.com/2023/2/6/23587393/ai-art-copyright-lawsuit-getty-images-stable-diffusion

Tech companies have tried to address the problem of deepfakes and of public figures’ faces being placed on pornographic images by removing such material from their sites or datasets, but these are among the unintended consequences caused by recklessness. The CEO of Stability AI, Emad Mostaque, nevertheless vehemently denies that the company has been anything but responsible.

Rubbing salt into the wounds of artists whose livelihoods are in peril, Stability AI is rumored to be poised to make millions, if not tens of millions, from its AI product. Of course, making money was always part of the plan: “Mostaque himself is a former hedge fund manager who’s contributed an unknown (but seemingly significant sum) to bankroll the creation of Stable Diffusion. He’s given slightly varying estimates as to the initial cost of the project, but they tend to hover at around $600,000 to $750,000. It’s a lot of money — well outside the reach of most academic institutions — but a tiny sum compared with the imagined value of the end product. And Mostaque is clear that he wants Stability AI to make a lot of money while sticking to its open source ethos, pointing to open source unicorns in the database market as a comparison.”[20]

Meanwhile, as the tech companies rake in the profits, real human beings, living artists, are being trampled in the race to build the best AI text-to-image generator. The scraping and laundering of data through non-profit groups has been so disingenuous, if not outright dishonest, that one must ask: should it not be made illegal? The lawsuits now under way will show where the legal system stands on this, and whether these tech corporations will at the very least be made to show respect.

[8] https://en.wikipedia.org/wiki/Stable_Diffusion#cite_note-:0-3 : “Diffuse The Rest – a Hugging Face Space by huggingface”. huggingface.co. Archived from the original on 2022-09-05. Retrieved 2022-09-05.

[11] Baio, Andy “Invasive Diffusion: How one unwilling illustrator found herself turned into an AI model” Waxy November 1, 2022 https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

[12] 32 of her illustrations can be found at https://imgur.com/a/8YRCGsW

[13] Baio, Andy “Invasive Diffusion: How one unwilling illustrator found herself turned into an AI model” Waxy November 1, 2022 https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

[14] Baio, Andy “Invasive Diffusion: How one unwilling illustrator found herself turned into an AI model” Waxy November 1, 2022 https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

[15] Baio, Andy “Invasive Diffusion: How one unwilling illustrator found herself turned into an AI model” Waxy November 1, 2022 https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

[16] Baio, Andy “Invasive Diffusion: How one unwilling illustrator found herself turned into an AI model” Waxy November 1, 2022 https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

[17] Baio, Andy “Invasive Diffusion: How one unwilling illustrator found herself turned into an AI model” Waxy November 1, 2022 https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

[18] Vincent, James “Anyone can use this AI art generator — that’s the risk: Stable Diffusion is a text-to-image AI that’s much more accessible than its predecessors” The Verge September 15, 2022 https://www.theverge.com/2022/9/15/23340673/ai-image-generation-stable-diffusion-explained-ethics-copyright-data

[19] Vincent, James “Getty Images sues AI art generator Stable Diffusion in the US for copyright infringement” The Verge February 6, 2023 https://www.theverge.com/2023/2/6/23587393/ai-art-copyright-lawsuit-getty-images-stable-diffusion

[20] Vincent, James “Anyone can use this AI art generator — that’s the risk: Stable Diffusion is a text-to-image AI that’s much more accessible than its predecessors” The Verge September 15, 2022 https://www.theverge.com/2022/9/15/23340673/ai-image-generation-stable-diffusion-explained-ethics-copyright-data
